In the quest to win in LLM results, the humble FAQ is back in the limelight. Is that really all we’re gonna get?

It feels like, not so long ago, the FAQ was nearly an extinct species in the world of web content. At least, I felt like I was seeing it less.

SEO’s Wild West days were over. Getting found had gone from a game of keywords to one of backlinks, authority, and an enigmatic mandate to make your content easy, useful, and relevant to users. (Keywords were still useful but, like, not as much.)

The FAQ was a casualty of this shift and many others. FAQs had been places to fill with rich content, full of juicy keywords. And they were useful, dammit! But, for some reason, big blocks of informative text started to feel old-school, like your grandpa’s internet.

At the same time, skinny little phone screens transformed web product writing into a sport of minimalism, not explanation. Not to mention, the front page of Google became (and remains) pay-to-play, eroding the value of ranking highly.

But now, LLMs have arrived. And while claims of them transforming everything from dieting to defense are probably a bit overblown, one shift is true: they have completely flipped the script around findability on the web. And they may just be the second wind FAQs were waiting for all this time.

Pondering the FAQ of tomorrow.

FAQs are about to have a moment.

What keywords are for search, questions are for LLMs. They listen attentively as you stumble your way through your next, most nuanced query:

Can you help me find the perfect gift for my dad? — 3 days before Father’s Day

Whats the bes t hotle in Orladno? — 4:34am on a Tuesday

Are artificial diamonds ethical? — Just watched Blood Diamond

What they do next seems to be different from anything our search engines have ever done. Those engines look for relevant results in their big, fat databases based on keywords, location, and user intent. LLMs go and retrieve answers from… everywhere. They measure validity, run sub-queries, and look near and far to find the best answer to your question.

Naturally, the best place to find answers is alongside questions, their precursor. Accordingly, FAQ content appears to perform quite well if you’re trying to show up in ChatGPT, Claude, or whatever will be out by the time this is published. It makes sense:

FAQs reflect how people ask and answer questions. So do LLMs.

FAQs are highly structured, which basically all web-crawling engines prefer.

FAQs tend to be robust in content, which gives LLMs — platforms judged by the merit and value of their responses — more to come back to you with.

I believe the desire to get seen in LLM results is going to make the FAQ the most important content module out there.
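To make "highly structured" a little more concrete, here is a minimal sketch of FAQ content expressed as schema.org `FAQPage` markup, built from plain question-and-answer pairs. The `FaqEntry` type, the function names, and the sample answer are all hypothetical, and whether any particular LLM crawler actually reads this markup is an assumption, not something I can confirm.

```typescript
// Hypothetical shape for one FAQ entry; the field names are illustrative.
interface FaqEntry {
  question: string;
  answer: string;
}

// Build a schema.org FAQPage object from plain question/answer pairs.
function buildFaqJsonLd(entries: FaqEntry[]): object {
  return {
    "@context": "https://schema.org",
    "@type": "FAQPage",
    mainEntity: entries.map((entry) => ({
      "@type": "Question",
      name: entry.question,
      acceptedAnswer: {
        "@type": "Answer",
        text: entry.answer,
      },
    })),
  };
}

// Serialize the object so it can be placed inside a
// <script type="application/ld+json"> tag on the page.
function toJsonLdString(entries: FaqEntry[]): string {
  return JSON.stringify(buildFaqJsonLd(entries), null, 2);
}

// Sample data: the answer text is a placeholder, not real content.
const sampleFaq: FaqEntry[] = [
  {
    question: "Are artificial diamonds ethical?",
    answer: "Placeholder answer text.",
  },
];

console.log(toJsonLdString(sampleFaq));
```

The point is less the markup itself than the shape: every question is paired with exactly one answer, in a form a machine can parse without guessing at your layout.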

The thing is, FAQs kind of suck.

I’ve never liked FAQs. They presumably take the things people actually want to know, strip them of all their context, and jam them at the bottom of the page where no one will ever go. It’s the UX equivalent of saying, “Let me finish, and then I’ll answer your questions.”

Resurrecting them is not the answer. Not as we know them, at least: the giant accordion that lurks just above the footer, hoping someone will read it. It’s time for something better.

The purpose of this experiment is to explore what the FAQ could be in this next chapter, and how it can work for LLMs while working harder for us humans, too.

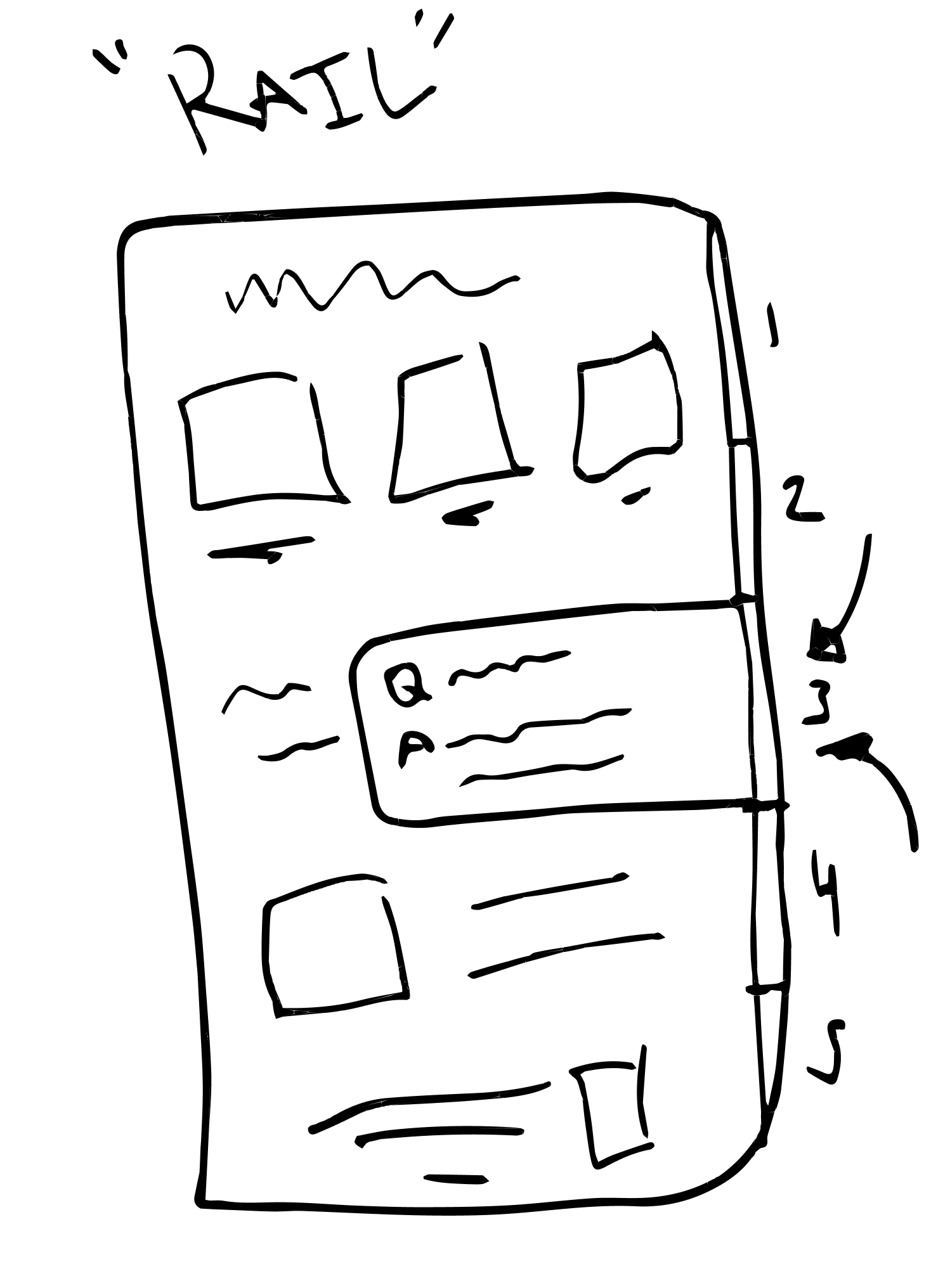

Concept 1: The Rail

Dispersing the FAQ in the service of context.

Start with an issue I raised above: context.

FAQs remove answers from the immediate context of a question. You read something, you have a question, and you get the answer later.

It’s not their fault; they do this in service of an arguable strength, which is consolidation. There’s a real case to be made that having one place where you know you can find answers is a good thing. But there’s a subtext, too: that you’d better hold onto those questions until the “answer moment.”

Confusion doesn’t exist in a vacuum — it distorts the whole picture. The longer we spend time with it, the more it mutates us. So, how do we attack confusion in the moment? Could we make a consolidated module that is also more contextual?

To do that, you’d need to be able to follow someone through any part of their context experience. There is a digital fixture that essentially does this already: the vertical scrollbar. It is the web’s most ubiquitous context indicator, orienting you on a page and telling you how close — or not — you are to the end.

It got me wondering if a similar kind of “rail” could be an answer for an FAQ alternative.

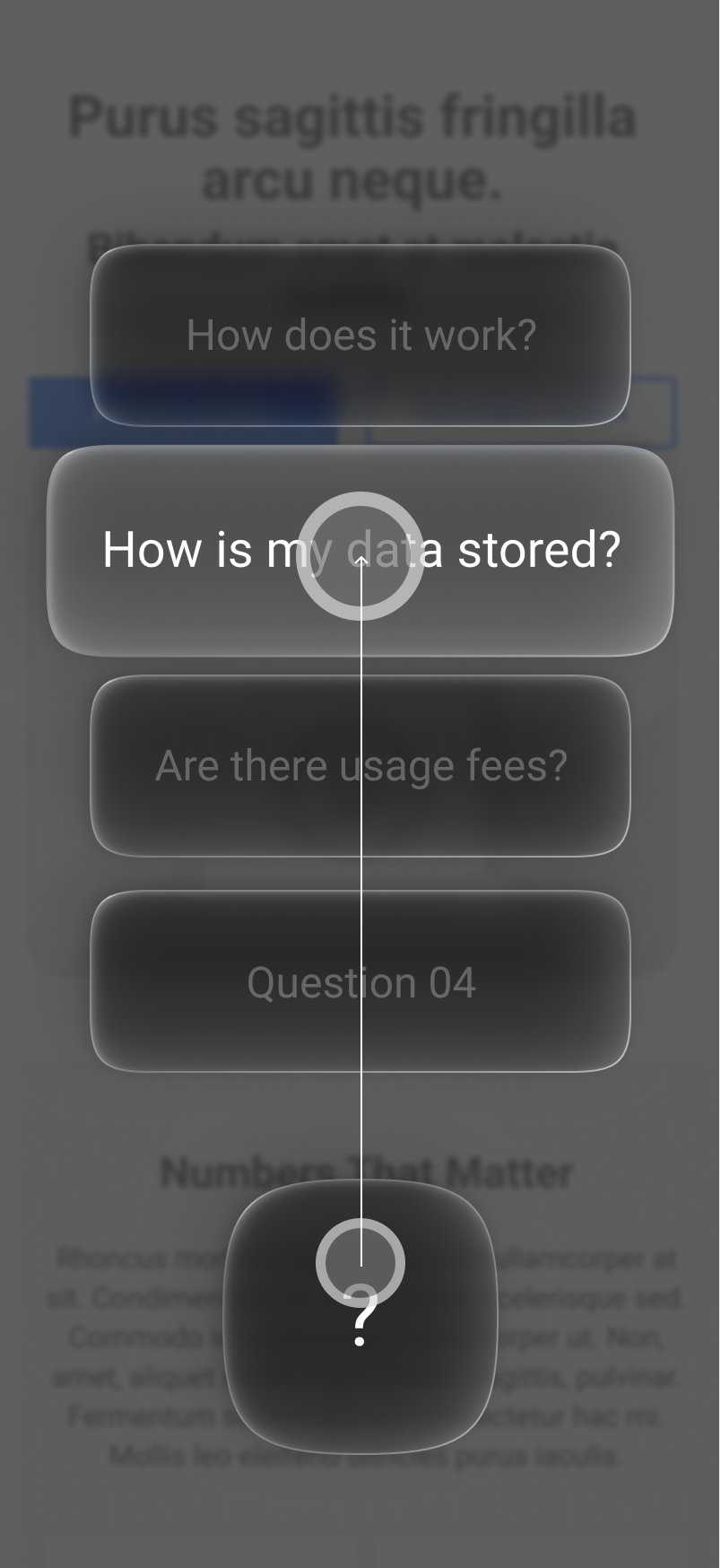

The idea that emerged is “The Rail” — a segmented FAQ that can be interacted with as content is consumed. Picture this:

You read a page, and reach a moment of confusion

You interact with the rail, because that’s where you get answers now

An overlay appears and provides information tailored to where you are on the page

You close it and get back to reading, no longer confused

The benefits include:

Less time confused and a clearer picture, sooner

Less time scrolling back and forth between what you’re reading and an FAQ

And LLMs like it because:

It potentially supports a greater quantity of content than your standard FAQ since it’s elegantly dispersed, which gives LLMs more to pull from.
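For the curious, the "tailored to where you are on the page" step could be sketched roughly like this. It is not the prototype's actual code: the `RailSegment` model, the function names, and the choice to treat the middle of the viewport as the reading position are all assumptions made for illustration.

```typescript
// Hypothetical data model: each rail segment covers a vertical slice of the page.
interface RailSegment {
  id: string;
  top: number; // distance from the top of the page, in pixels
  height: number; // height of the slice, in pixels
  questions: string[];
}

// Assumption: the reader is looking at the vertical middle of the viewport.
function readingPosition(scrollY: number, viewportHeight: number): number {
  return scrollY + viewportHeight / 2;
}

// Return the segment that contains the given position, or null if none does.
function findActiveSegment(
  segments: RailSegment[],
  position: number
): RailSegment | null {
  for (const segment of segments) {
    if (position >= segment.top && position < segment.top + segment.height) {
      return segment;
    }
  }
  return null;
}

// Sample page with two segments; the questions are placeholders.
const railSegments: RailSegment[] = [
  { id: "intro", top: 0, height: 600, questions: ["What is this product?"] },
  { id: "pricing", top: 600, height: 900, questions: ["Is there a free tier?"] },
];

const activeSegment = findActiveSegment(railSegments, readingPosition(400, 800));
console.log(activeSegment ? activeSegment.questions : []);
```

In a browser, the two numbers passed to `readingPosition` would come from `window.scrollY` and `window.innerHeight`, and the overlay would render whatever questions the active segment holds.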

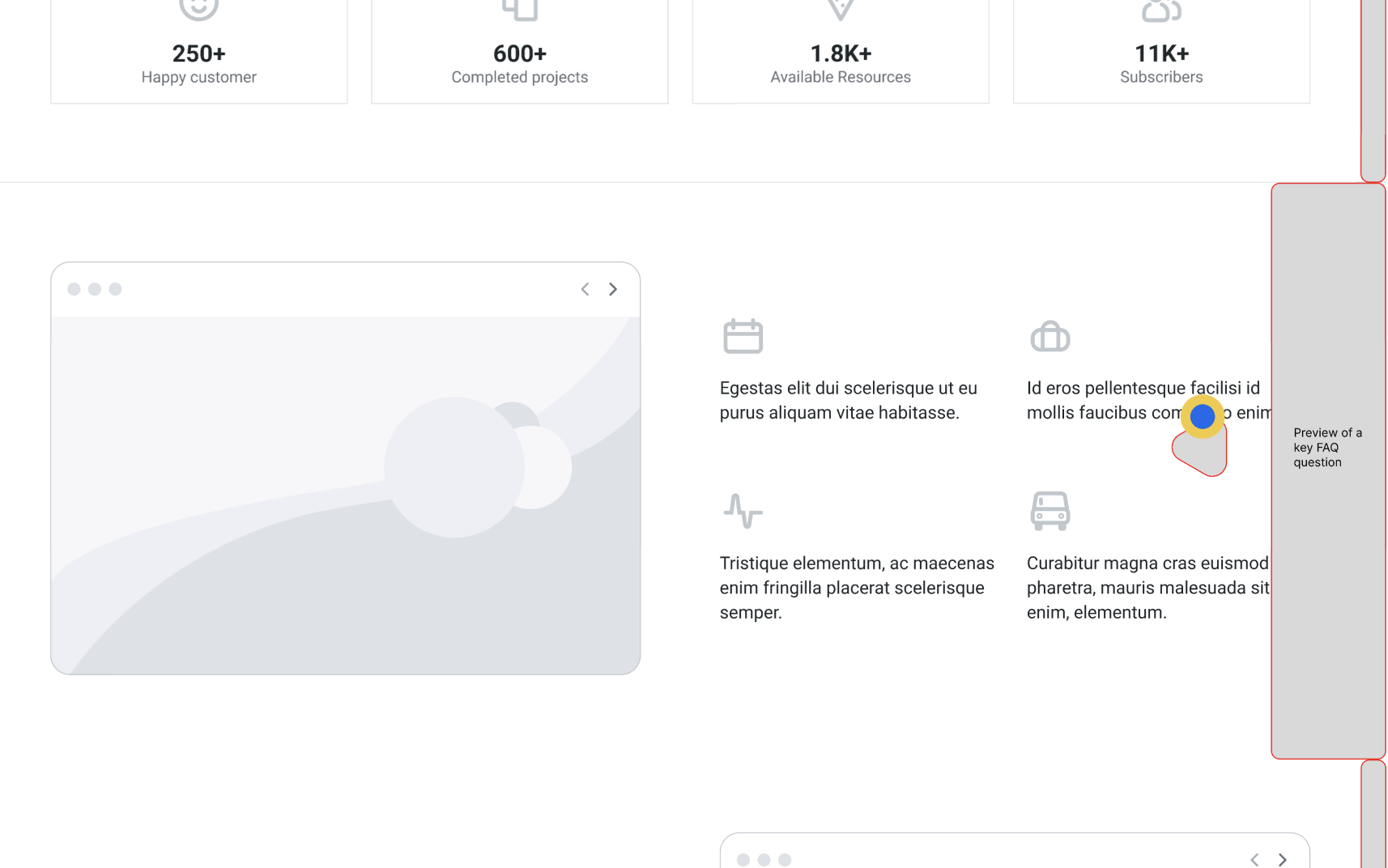

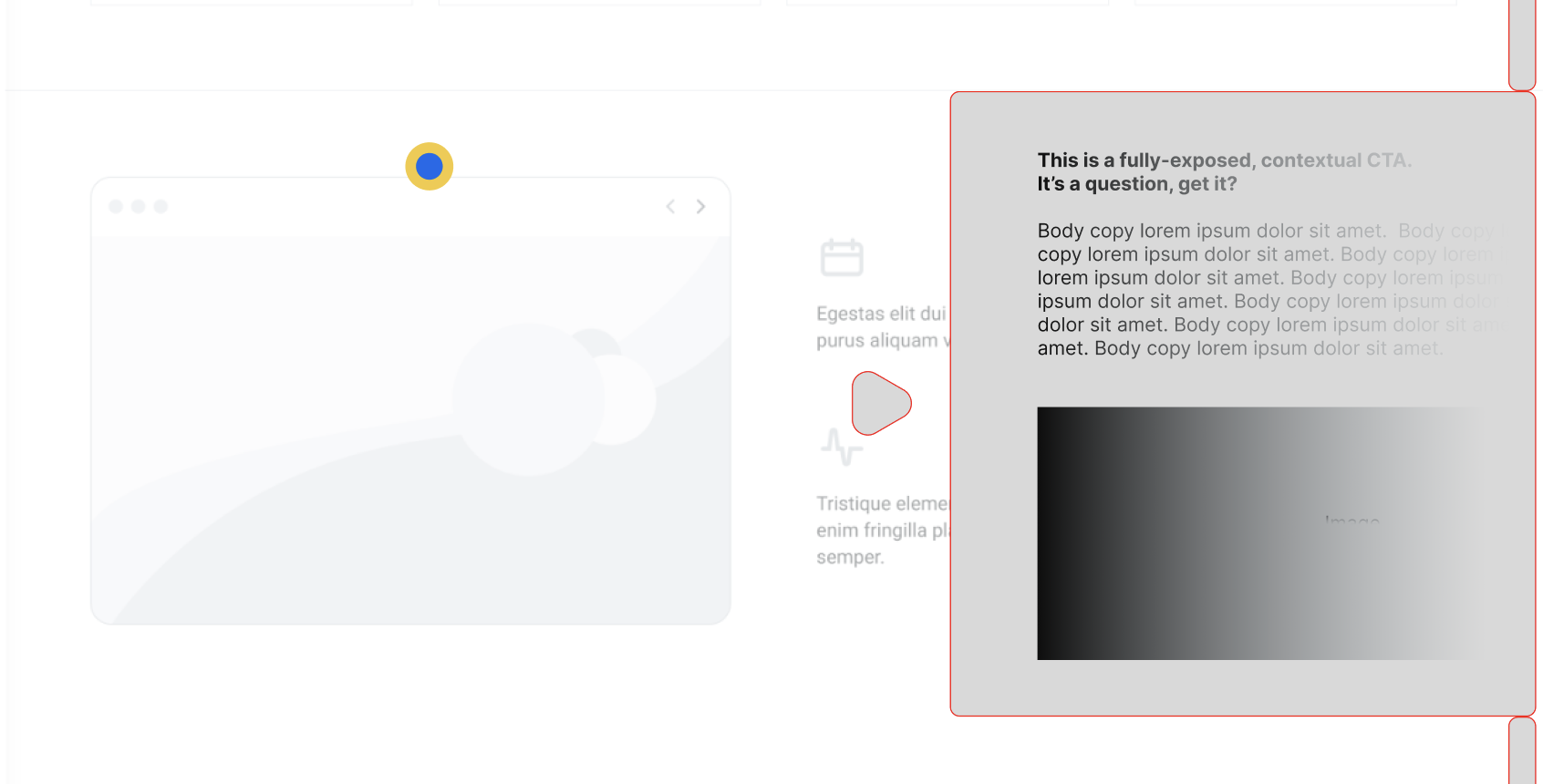

Rapid prototyping

I wireframed this functionality in Figma and took it into Claude Code to create a rapid prototype of The Rail.

In Figma

Coded prototype

The results are interesting. This is by no means a UX revelation, but having the content I need within arm’s reach does feel nicely practical. This could also be an interesting front-end for an AI agent when something like a floating pill isn’t possible.

This method also seems quite compatible with packing in a lot of information, a potential win for a banking or government site where compliance demands having a lot of usually ugly copy.

There are rough edges, too. Segmenting logic means smaller, narrower modules tend to work better, which means immersive sites with lots of full-bleed media probably aren’t going to love this solution. Mobile is not something I’ll explore here, but it surely raises a few additional questions.

Download the HTML and try it yourself: The Rail Prototype

Concept 2: The Radius

Swipe to know. Then keep learning. Accordion free.

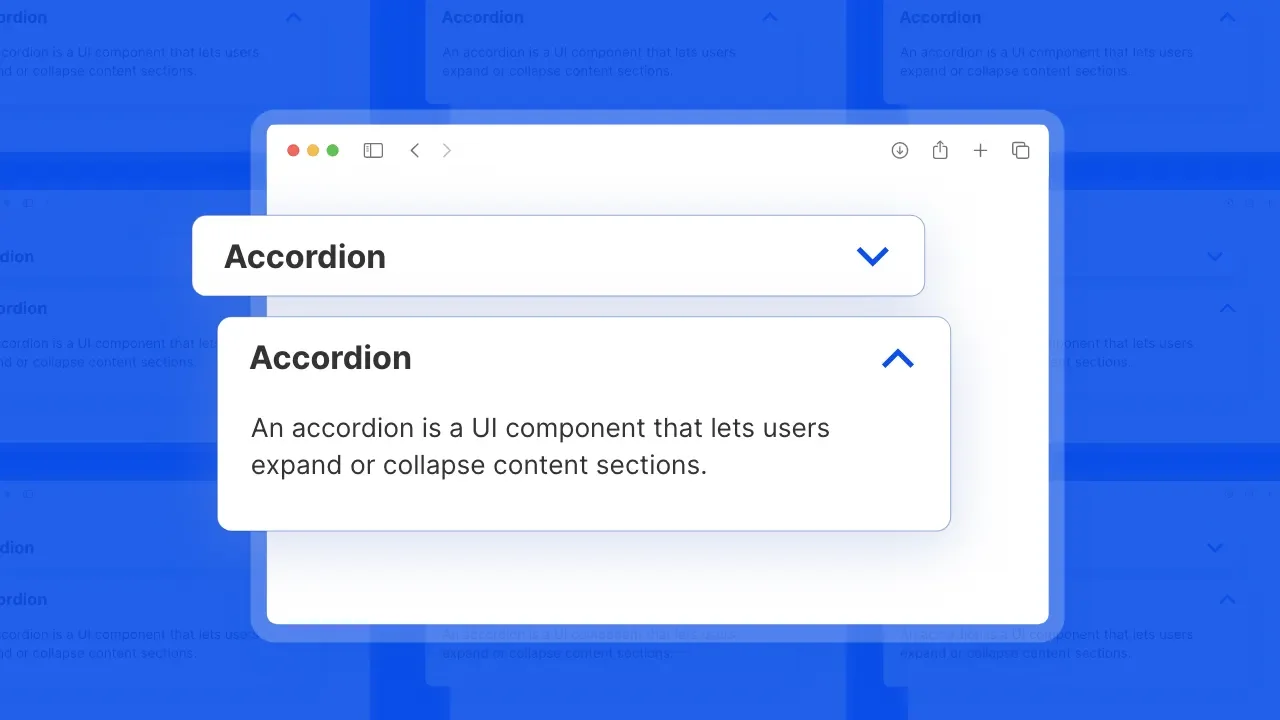

Midway through this exploration, I texted my brother about tomorrow’s FAQ. He quickly identified something that has irked me about FAQs for a long time: the accordion menu. No UI convention is more essential to the proliferation of the FAQ than the accordion, and you better believe it’s being dropped on more sites than ever, right now.

Accordion menus are real pixel bullies. Open one drawer, and everything else makes way for it. This makes accordions a total mess on mobile once the contents are more than like… a paragraph. Often, good answers are.

But he was also a believer that consolidation was a strength, and that having your answers in one place was a good thing. So the question was, how could you consolidate everything in one go, but make it seamless and solve the challenges of the age-old accordion?

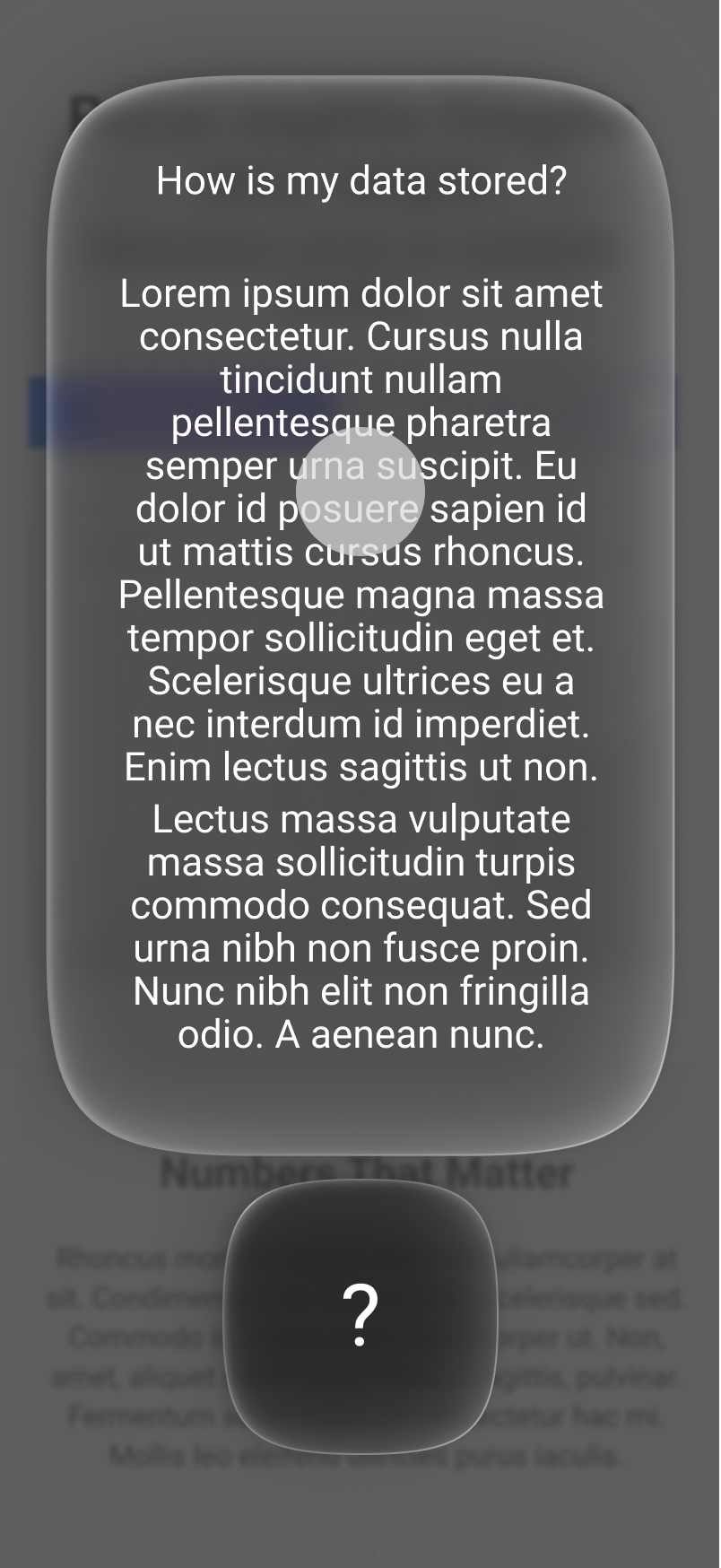

He quickly followed up with a wireframe for a “Swipe-Up FAQ”.

One floating pill, made to go where you go. I liked this. The Rail was locked to the content on the page, beholden to its structure. But this concept could travel anywhere. It was portable.

I also like this a lot more than just plopping down a generic “Ask me anything” prompt on the page, which is becoming more and more common. This skips the fanfare of having to type, ask, or query — not to mention we don’t always know the right questions to ask. Here, you get right to the important questions.

The benefits include:

A consolidated support center that goes anywhere and everywhere

Mobile-friendly like accordion-style FAQs will never be

A more seamless experience versus something like an LLM prompt / widget

And LLMs like it because:

As you’ll see in our prototype, this can support a ton of highly structured content. That’s all the more to get crawled, ranked, and served back up.

Rapid prototyping

I took this wire into Claude and created a prototype of what I now lovingly call The Radius. In doing this, I also added some new functionality, including question themes, which make it easier to navigate.

When evaluating a concept like this, I ask myself: does this make it easier to learn? Or more fun? And for me, this does both. The radius format evokes the flow of thinking, and gets me wondering about a more robust feature where common questions are chained together — or generated procedurally as the user learns.

Plus, if The Rail saved space, The Radius eliminates the issue of space completely. You can fit a massive amount of content into this format. When you consider that you might have variations on this module by page or even your location on the page… you’re talking about a real content container to end all content containers.
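Here is a rough sketch of how question themes and chained questions might be modeled. It is an illustration rather than the prototype's code: the `RadiusQuestion` shape, the `nextId` link, and both function names are hypothetical.

```typescript
// Hypothetical model for one question in The Radius.
interface RadiusQuestion {
  id: string;
  theme: string;
  question: string;
  nextId?: string; // optional link to a suggested follow-up question
}

interface ThemeGroup {
  theme: string;
  questions: RadiusQuestion[];
}

// Group questions by theme, preserving the order themes first appear in.
function groupByTheme(questions: RadiusQuestion[]): ThemeGroup[] {
  const groups: ThemeGroup[] = [];
  for (const q of questions) {
    let group: ThemeGroup | undefined;
    for (const candidate of groups) {
      if (candidate.theme === q.theme) group = candidate;
    }
    if (group === undefined) {
      group = { theme: q.theme, questions: [] };
      groups.push(group);
    }
    group.questions.push(q);
  }
  return groups;
}

// Walk the chain of follow-ups from a starting question, stopping on
// a missing link or a repeat so a loop in the data cannot run forever.
function followChain(questions: RadiusQuestion[], startId: string): RadiusQuestion[] {
  const chain: RadiusQuestion[] = [];
  const seen: string[] = [];
  let currentId: string | undefined = startId;
  while (currentId !== undefined && seen.indexOf(currentId) === -1) {
    let current: RadiusQuestion | undefined;
    for (const q of questions) {
      if (q.id === currentId) current = q;
    }
    if (current === undefined) break;
    chain.push(current);
    seen.push(currentId);
    currentId = current.nextId;
  }
  return chain;
}

// Placeholder questions for illustration only.
const radiusQuestions: RadiusQuestion[] = [
  { id: "cost", theme: "Pricing", question: "What does it cost?", nextId: "refund" },
  { id: "refund", theme: "Pricing", question: "Can I get a refund?", nextId: "delivery" },
  { id: "delivery", theme: "Shipping", question: "How long is delivery?" },
];

console.log(groupByTheme(radiusQuestions).map((g) => g.theme));
console.log(followChain(radiusQuestions, "cost").map((q) => q.question));
```

Themes give the swipe-up view its navigation, while the chain is what would let one answer hand you the next obvious question.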

Download the HTML and try it yourself: The Radius Prototype

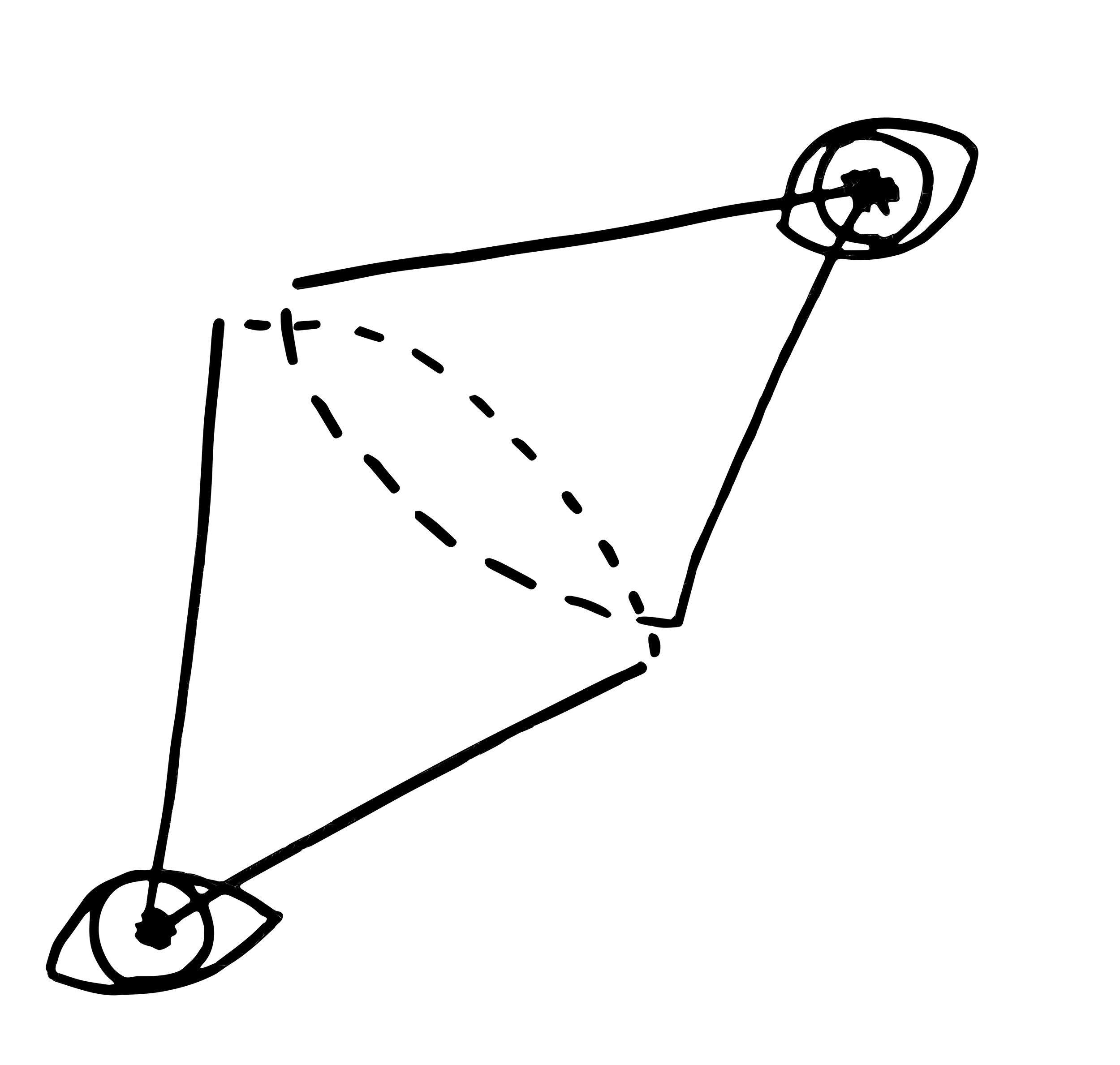

Concept 3: The Inspector

An FAQ magnifying glass for… anything?

From an experience POV, FAQs are a bit of a leap of faith.

You have to trust that your page author has the nuanced point of view to include the right questions for the average reader — including yours. There is nothing more bone-breakingly annoying than getting to an FAQ only to find that your totally reasonable question isn’t accounted for. This is a critique that extends to both of the concepts above as well: the Rail is a system for one question at a time; the Radius is a larger collection, but a collection nonetheless.

Obviously, an ever-present AI chatbot is a solution to this challenge that is increasingly viable today. But can I level with you for a moment? I’m so tired of typing out questions. And what’s more, FAQs should answer questions you have and those you weren’t smart enough to have. So a chatbot… it isn’t really doing it for me.

So, how do you ensure you have exactly the right FAQ questions in as many scenarios as possible? I started imagining a magnifying glass that I could point anywhere on a digital experience — one that reveals the FAQ questions that matter most.

Rapid prototyping

The benefit of this, in my mind, is total contextual freedom. Finally, an FAQ that goes anywhere and illuminates everything. I took these ideas into Claude and guided that sweet little rascal to create a rapid prototype for this functionality.

This convention has had a few names: the looker, the ouija, the dropper. But ultimately, I call it The Inspector.

The result is not just informative, but curious. You read something. You wonder about it. You drag, drop, and dive deeper. Maybe I didn’t make an FAQ? Maybe I made a rabbit-hole generator?

The benefits include:

You can basically always count on having exactly the FAQ questions you want

Highly contextual; get information on a single paragraph

Actually a little bit fun, like a point-and-click experience in your browser

And LLMs like it because:

Well… will they? I question whether this solution is totally possible (or scalable) without the mighty power of LLMs, and if that content is being generated in real time, then it’s likely not being crawled by AI. So, this solution may be effective for certain things, but it might not ultimately solve the challenge that is resurrecting the FAQ in the first place: we need more structured content to grip the attention of AI crawlers.
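One possible middle ground, sketched below, is to skip real-time generation and instead rank a set of pre-written questions against whatever text the Inspector is pointed at. To be clear, this is a swapped-in technique (plain keyword overlap), not how the prototype works, and every name in it is hypothetical. The appeal is that pre-written questions can live in the page itself, where a crawler has at least a chance of finding them.

```typescript
// Hypothetical pre-written question with hand-picked keywords.
interface IndexedQuestion {
  question: string;
  keywords: string[];
}

// Lowercase the text and split it into words, dropping very short ones.
function tokenize(text: string): string[] {
  return text
    .toLowerCase()
    .split(/[^a-z0-9]+/)
    .filter((word) => word.length > 2);
}

// Score each question by how many of its keywords appear in the inspected
// text, drop questions with no match, and return the top `limit` questions.
function rankQuestions(
  selection: string,
  index: IndexedQuestion[],
  limit: number
): string[] {
  const words = tokenize(selection);
  const scored = index
    .map((entry) => ({
      question: entry.question,
      score: entry.keywords.filter(
        (keyword) => words.indexOf(keyword.toLowerCase()) !== -1
      ).length,
    }))
    .filter((item) => item.score > 0);
  scored.sort((a, b) => b.score - a.score);
  return scored.slice(0, limit).map((item) => item.question);
}

// Placeholder index and inspected paragraph, for illustration only.
const questionIndex: IndexedQuestion[] = [
  { question: "How are lab-grown diamonds made?", keywords: ["lab", "grown", "diamonds"] },
  { question: "What is the return policy?", keywords: ["return", "refund", "policy"] },
  { question: "Do diamonds hold their value?", keywords: ["diamonds", "value", "resale"] },
];

const inspectedText =
  "Our lab-grown diamonds are certified and hold their value over time.";

console.log(rankQuestions(inspectedText, questionIndex, 2));
```

The trade-off is obvious: you lose the "always exactly the question you want" magic of generated answers, and you are back to trusting the author's list.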

Download the HTML and try it yourself: Inspector Prototype

The experiments here are likely not UX revelations, and I’m sure some of them already exist, to varying degrees, in the market today. If that’s the case, send them my way. If you have an idea of what the FAQ of the future looks like, send it my way, too. Or go make it yourself, then send it my way. It’s on us to demand (and create) a better experience online.

And I suppose that brings us to the “big picture” moment.

For copywriters and content folks like me, the challenge of the future will not be clinging frightfully to our jobs. It will be taste and ingenuity. We will change how we write, and we’ll change again a year later. But we’ll write it good. And when people try to make websites into FAQ soups just to win the LLM race, we’ll need to steer them towards a happy medium. And when everything breaks, we’ll need to be ready to build it again in a way that works for the story and the machines alike. Our collective mission for the next decade is to save great content.

Final Thoughts

In a lot of ways, AI smacks of the heyday of SEO. We’re in a new Wild West era, and it’s hard to say how LLMs will work next week, let alone next year. That said, I’m happy for the FAQ: an old paradigm enjoying new life.